Smartphone Position Detection Using Neural Networks

This project demonstrates an approach to detecting common smartphone positions: “In Hand”, “Side Pocket”, “In Handbag”, and “On Table (Idle)”. We apply sensor data fusion to a set of hardware- and software-based Android sensors. Each data entry containing the fused features is then classified individually, without considering patterns across consecutive entries. The overall system design is presented, and its accuracy is evaluated on a Google Nexus 6 device.
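To illustrate what classifying each entry individually means, here is a minimal Python sketch. The feature layout (gravity, linear acceleration, gyroscope, rotation vector, and ambient light, giving 13 values to match the network's 13 input nodes) and the names `SensorSnapshot`, `to_feature_vector`, and `classify_entries` are hypothetical; this is not the project's actual feature set or code.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Sequence


@dataclass
class SensorSnapshot:
    """Hypothetical snapshot of several Android sensors taken at one moment."""
    gravity: Sequence[float]              # x, y, z in m/s^2 (software sensor)
    linear_acceleration: Sequence[float]  # x, y, z in m/s^2 (software sensor)
    gyroscope: Sequence[float]            # x, y, z in rad/s (hardware sensor)
    rotation_vector: Sequence[float]      # x, y, z components (software sensor)
    light: float                          # ambient light in lux (hardware sensor)


def to_feature_vector(s: SensorSnapshot) -> List[float]:
    """Fuse one snapshot into a single flat 13-element data entry (assumed layout)."""
    features = [float(v) for v in (*s.gravity, *s.linear_acceleration,
                                   *s.gyroscope, *s.rotation_vector)]
    features.append(float(s.light))
    if len(features) != 13:
        raise ValueError(f"expected 13 features, got {len(features)}")
    return features


def classify_entries(snapshots: Iterable[SensorSnapshot],
                     classify: Callable[[List[float]], str]) -> List[str]:
    """Classify every entry on its own; no state is carried between entries."""
    return [classify(to_feature_vector(s)) for s in snapshots]
```

Because `classify_entries` keeps no history, reordering the input only reorders the output. A pattern-based approach would instead examine windows of consecutive entries.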

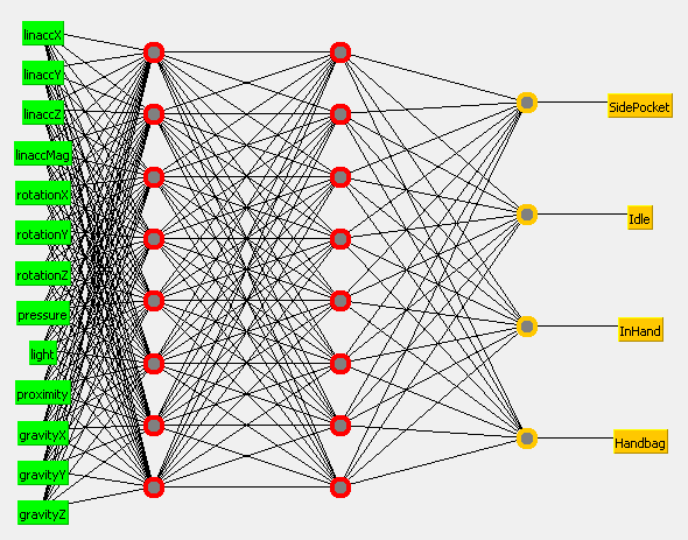

The fully connected neural network (NN) used for classification. Nodes per layer: 13 (input), 8 and 8 (hidden), 4 (output).
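The following pure-Python sketch shows a forward pass through a 13-8-8-4 fully connected network. It assumes sigmoid activations on every node, which is what Weka's MultilayerPerceptron uses for a nominal class; whether the project used that exact classifier and configuration is an assumption. The weights are random and untrained, and the names `init_network`, `forward`, and `predict` are hypothetical.

```python
import math
import random
from typing import List, Sequence, Tuple

LAYER_SIZES = [13, 8, 8, 4]  # input, hidden, hidden, output
CLASSES = ["In Hand", "Side Pocket", "In Handbag", "On Table (Idle)"]

Layer = Tuple[List[List[float]], List[float]]  # (weights[out][in], biases[out])


def sigmoid(x: float) -> float:
    """Numerically stable logistic function (avoids overflow for large |x|)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def init_network(sizes: Sequence[int], seed: int = 0) -> List[Layer]:
    """Random weights for each fully connected layer (untrained, illustrative only)."""
    rng = random.Random(seed)
    layers: List[Layer] = []
    for n_in, n_out in zip(sizes, sizes[1:]):
        weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
        biases = [rng.uniform(-0.5, 0.5) for _ in range(n_out)]
        layers.append((weights, biases))
    return layers


def forward(layers: List[Layer], x: Sequence[float]) -> List[float]:
    """Propagate one entry; every node receives all outputs of the previous layer."""
    if len(x) != len(layers[0][0][0]):
        raise ValueError("input length does not match the input layer")
    activations = list(x)
    for weights, biases in layers:
        activations = [sigmoid(sum(w * a for w, a in zip(row, activations)) + b)
                       for row, b in zip(weights, biases)]
    return activations


def predict(layers: List[Layer], x: Sequence[float]) -> str:
    """Pick the position whose output node has the highest activation."""
    outputs = forward(layers, x)
    return CLASSES[max(range(len(outputs)), key=outputs.__getitem__)]
```

In practice the weights would come from training on logged, labeled entries; only the layer sizes here are taken from the figure.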

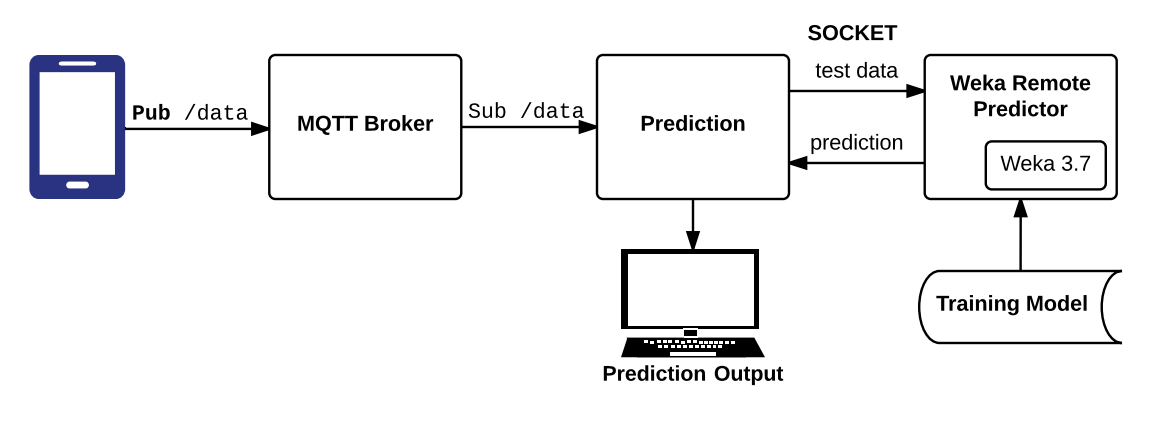

Prediction system diagram. Remote prediction using Weka.

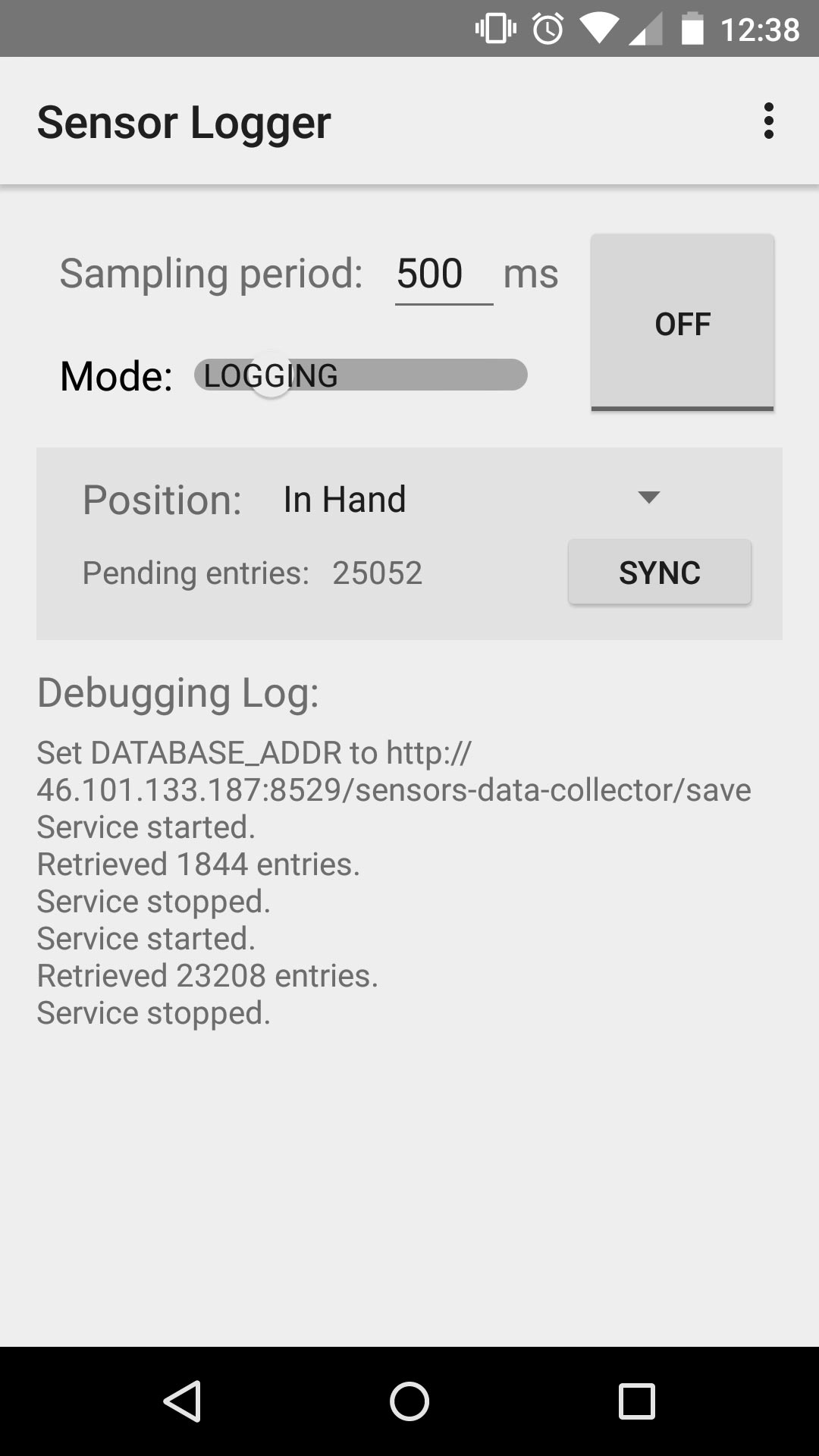

Android Application: Logging mode

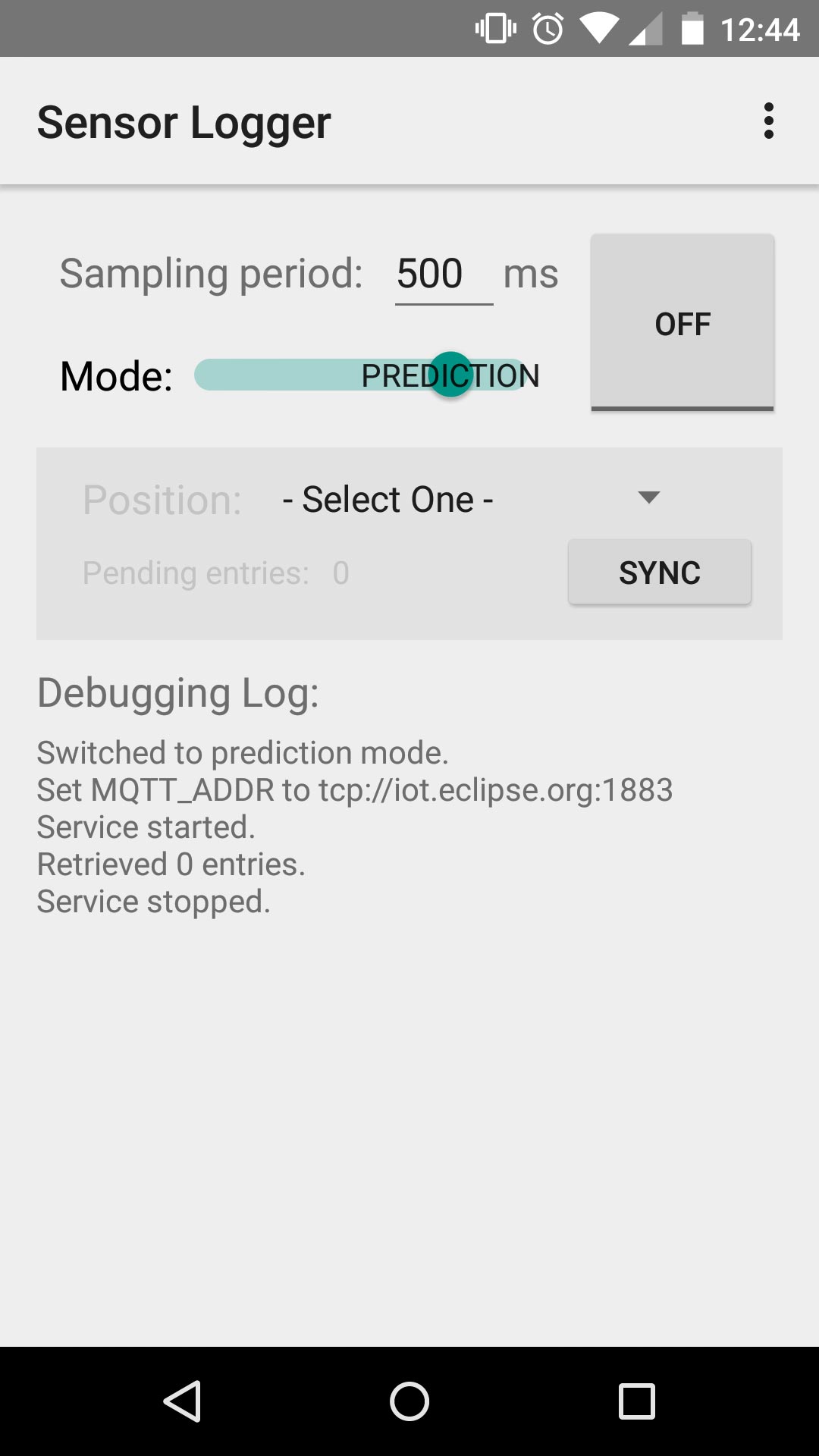

Android Application: Prediction mode

Source Code

https://github.com/farshidtz/mss2015

Talk

Other contributors:

Omar Al-Safi